We deployed 100 reinforcement learning (RL)-controlled cars into rush-hour highway traffic to smooth congestion and reduce fuel consumption for everyone. Our goal is to tackle “stop-and-go” waves, those frustrating slowdowns and speedups that usually have no clear cause but lead to congestion and significant energy waste. To train efficient flow-smoothing controllers, we built fast, data-driven simulations that RL agents interact with, learning to maximize energy efficiency while maintaining throughput and operating safely around human drivers.

Overall, a small proportion of well-controlled autonomous vehicles (AVs) is enough to significantly improve traffic flow and fuel efficiency for all drivers on the road. Moreover, the trained controllers are designed to be deployable on most modern vehicles, operating in a decentralized manner and relying on standard radar sensors. In our latest paper, we explore the challenges of deploying RL controllers at large scale, from simulation to the field, during this 100-car experiment.

The challenges of phantom jams

A stop-and-go wave moving backwards through highway traffic.

If you drive, you’ve surely experienced the frustration of stop-and-go waves, those seemingly inexplicable traffic slowdowns that appear out of nowhere and then suddenly clear up. These waves are often caused by small fluctuations in our driving behavior that get amplified through the flow of traffic. We naturally adjust our speed based on the vehicle in front of us. If the gap opens, we speed up to keep up. If they brake, we also slow down. But because of our nonzero reaction time, we might brake just a bit harder than the vehicle in front. The next driver behind us does the same, and this keeps amplifying. Over time, what started as an insignificant slowdown turns into a full stop further back in traffic. These waves move backward through the traffic stream, leading to significant drops in energy efficiency due to frequent accelerations, accompanied by increased CO2 emissions and accident risk.
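To make the amplification argument concrete, here is a deliberately crude toy model, not a traffic simulator: it assumes each driver brakes a fixed percentage harder than the driver ahead, and counts how many cars it takes for a small slowdown to become a full stop. The function name, the geometric growth assumption, and the 10% factor are all illustrative choices, not measurements.

```python
def cars_until_full_stop(cruise_speed, initial_drop, amplification=1.1):
    """Count how many following cars it takes for a slowdown to reach a full stop.

    Toy assumption: every driver's speed drop is `amplification` times the
    drop of the driver directly ahead (speeds in m/s).
    """
    if amplification <= 1.0:
        raise ValueError("amplification must exceed 1 for the wave to grow")
    if initial_drop <= 0.0:
        raise ValueError("initial_drop must be positive")

    cars = 0
    drop = initial_drop
    # Propagate the slowdown backward, one follower at a time.
    while drop < cruise_speed:
        drop *= amplification
        cars += 1
    return cars


# A 2 m/s tap on the brakes at 30 m/s, amplified by 10% per driver.
print(cars_until_full_stop(30.0, 2.0))
```

Under these assumptions, even a mild per-driver overreaction is enough to stop traffic a few dozen cars back, which is why damping the amplification at a few points in the stream can matter.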

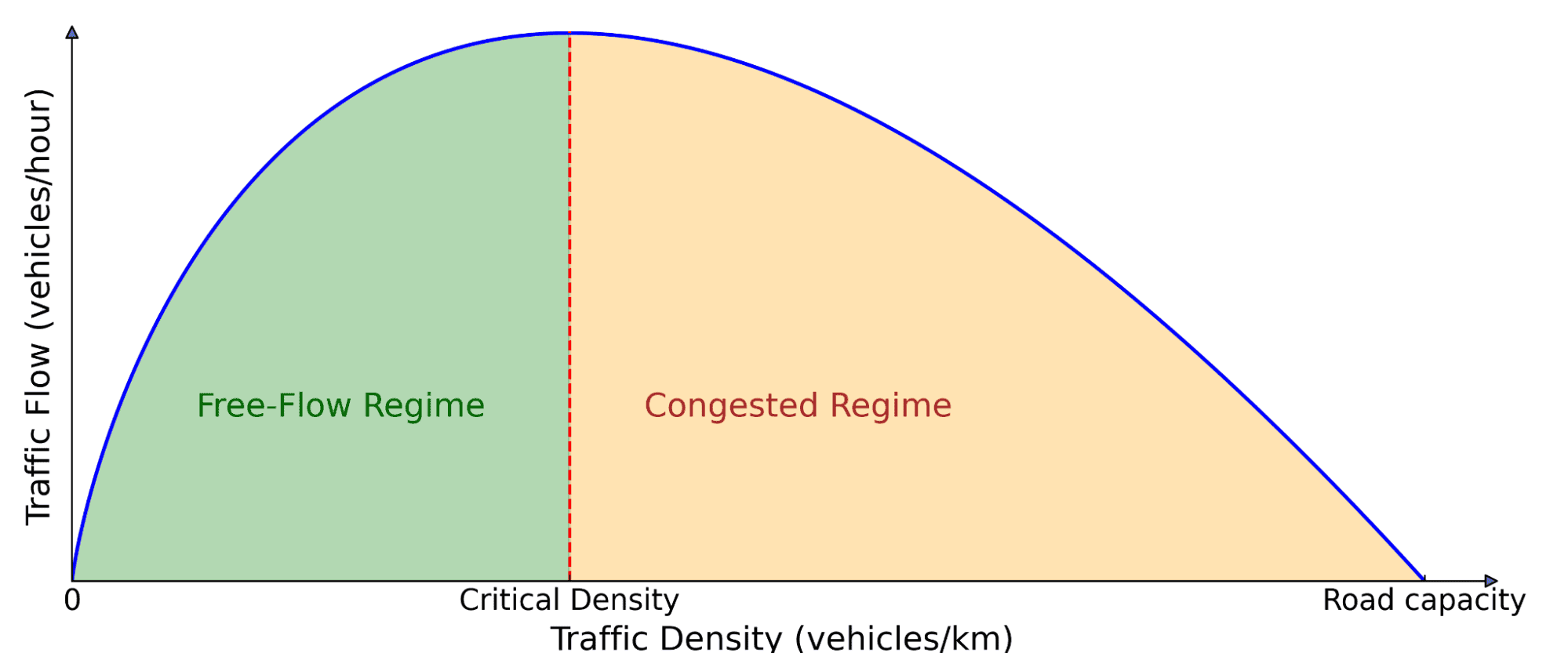

And this isn’t an isolated phenomenon! These waves are ubiquitous on busy roads when the traffic density exceeds a critical threshold. So how can we address this problem? Traditional approaches like ramp metering and variable speed limits attempt to manage traffic flow, but they often require costly infrastructure and centralized coordination. A more scalable approach is to use AVs, which can dynamically adjust their driving behavior in real time. However, simply inserting AVs among human drivers isn’t enough: they must also drive in a smarter way that makes traffic better for everyone, which is where RL comes in.

Fundamental diagram of traffic flow. The number of cars on the road (density) affects how much traffic is moving forward (flow). At low density, adding more cars increases flow because more vehicles can pass through. But beyond a critical threshold, cars start blocking each other, leading to congestion, where adding more cars actually slows down overall movement.
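The shape of this curve can be reproduced with the Greenshields model, a textbook simplification in which speed falls linearly as density rises. The model choice and the parameter values below (`free_flow_speed`, `jam_density`) are assumptions for illustration and are not fitted to the I-24 data.

```python
def greenshields_flow(density, free_flow_speed=30.0, jam_density=0.15):
    """Flow (vehicles/s) at a given density (vehicles/m) under the Greenshields model.

    Assumed illustrative parameters: 30 m/s free-flow speed and a jam
    density of 0.15 vehicles per meter of lane.
    """
    if not 0.0 <= density <= jam_density:
        raise ValueError("density must lie between 0 and jam_density")
    # Speed decreases linearly from free flow (empty road) to zero (jam).
    speed = free_flow_speed * (1.0 - density / jam_density)
    return density * speed


def critical_density(jam_density=0.15):
    """Density at which flow peaks; for Greenshields this is half the jam density."""
    return jam_density / 2.0


for k in (0.02, 0.05, critical_density(), 0.10, 0.14):
    print(f"density={k:.3f} veh/m  flow={greenshields_flow(k):.3f} veh/s")
```

Flow rises with density up to the critical point and falls beyond it, which is the congested regime where stop-and-go waves appear.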

Reinforcement learning for wave-smoothing AVs

RL is a powerful control approach where an agent learns to maximize a reward signal through interactions with an environment. The agent collects experience through trial and error, learns from its mistakes, and improves over time. In our case, the environment is a mixed-autonomy traffic scenario, where AVs learn driving strategies to dampen stop-and-go waves and reduce fuel consumption for both themselves and nearby human-driven vehicles.

Training these RL agents requires fast simulations with realistic traffic dynamics that can replicate highway stop-and-go behavior. To achieve this, we leveraged experimental data collected on Interstate 24 (I-24) near Nashville, Tennessee, and used it to build simulations where vehicles replay highway trajectories, creating unstable traffic that AVs driving behind them learn to smooth out.

Simulation replaying a highway trajectory that exhibits several stop-and-go waves.

We designed the AVs with deployment in mind, ensuring that they can operate using only basic sensor information about themselves and the vehicle in front. The observations consist of the AV’s speed, the speed of the leading vehicle, and the space gap between them. Given these inputs, the RL agent then prescribes either an instantaneous acceleration or a desired speed for the AV. The key advantage of using only these local measurements is that the RL controllers can be deployed on most modern vehicles in a decentralized manner, without requiring additional infrastructure.
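The interface described above is small enough to sketch. The snippet below shows the three local observations and a stand-in policy that maps them to a bounded acceleration. The names (`Observation`, `normalize`, `placeholder_policy`), the normalization constants, and the linear rule are all hypothetical; the deployed controller is a trained neural network, which this hand-written rule does not reproduce.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    ego_speed: float   # AV speed, m/s
    lead_speed: float  # speed of the vehicle in front, m/s
    space_gap: float   # distance to the vehicle in front, m


def normalize(obs, max_speed=40.0, max_gap=100.0):
    """Scale observations to roughly [0, 1]; the constants are assumed values."""
    return (
        obs.ego_speed / max_speed,
        obs.lead_speed / max_speed,
        min(obs.space_gap, max_gap) / max_gap,
    )


def placeholder_policy(obs, max_accel=1.5):
    """Hand-written stand-in for a trained policy; returns acceleration in m/s^2."""
    ego, lead, gap = normalize(obs)
    # Speed up when the leader is faster or the gap is large, brake otherwise.
    raw = 2.0 * (lead - ego) + 1.0 * (gap - 0.3)
    # Clip the command to a comfortable acceleration range.
    return max(-max_accel, min(max_accel, raw * max_accel))


closing_in = Observation(ego_speed=30.0, lead_speed=20.0, space_gap=10.0)
print(placeholder_policy(closing_in))  # negative: the AV should slow down
```

Because the input is just three numbers available from onboard sensing, any function with this signature can be swapped in, which is what makes a decentralized deployment practical.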

Reward design

The most challenging part is designing a reward function that, when maximized, aligns with the different objectives that we want the AVs to achieve:

- Wave smoothing: Reduce stop-and-go oscillations.

- Energy efficiency: Lower fuel consumption for all vehicles, not just AVs.

- Safety: Ensure reasonable following distances and avoid abrupt braking.

- Driving comfort: Avoid aggressive accelerations and decelerations.

- Adherence to human driving norms: Ensure a “normal” driving behavior that doesn’t make surrounding drivers uncomfortable.

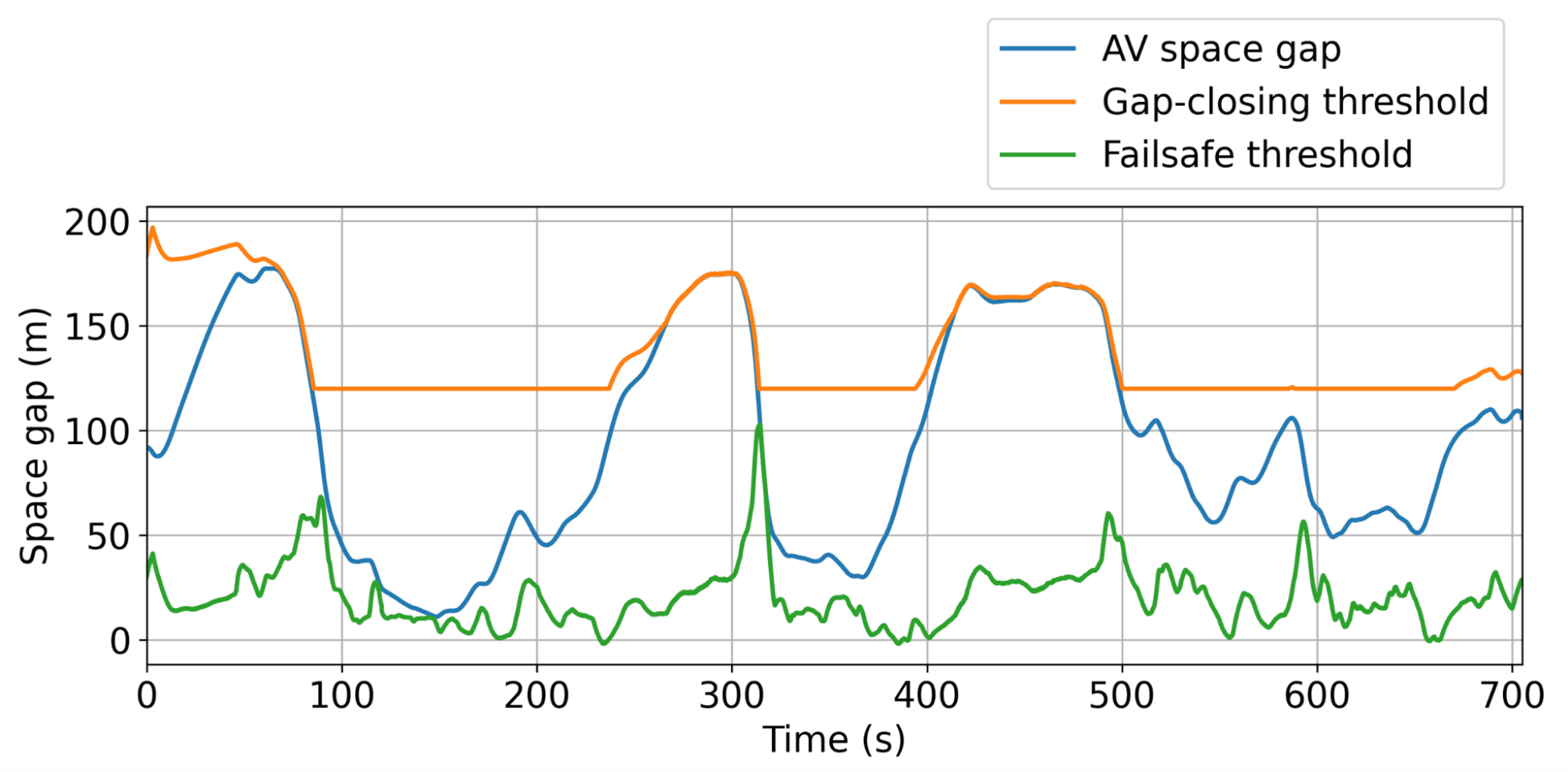

Balancing these objectives together is difficult, as suitable coefficients for each term must be found. For instance, if minimizing fuel consumption dominates the reward, RL AVs learn to come to a stop in the middle of the highway because that is energy optimal. To prevent this, we introduced dynamic minimum and maximum gap thresholds to ensure safe and reasonable behavior while optimizing fuel efficiency. We also penalized the fuel consumption of human-driven vehicles behind the AV to discourage it from learning a selfish behavior that optimizes energy savings for the AV at the expense of surrounding traffic. Overall, we aim to strike a balance between energy savings and having a reasonable and safe driving behavior.

Simulation results

Illustration of the dynamic minimum and maximum gap thresholds, within which the AV can operate freely to smooth traffic as efficiently as possible.
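One way to picture this trade-off is as a weighted sum of penalties. The sketch below is an assumption-heavy illustration: the term forms, the weights, the time-headway values, and the choice to treat the gap thresholds as a penalty (rather than, say, a hard override) are all hypothetical and do not reproduce the reward used in the paper.

```python
def gap_bounds(ego_speed, min_headway=1.0, max_headway=4.0, standstill_gap=5.0):
    """Speed-dependent (dynamic) gap thresholds in meters.

    Assumed form: a fixed standstill gap plus a time headway times speed.
    """
    min_gap = standstill_gap + min_headway * ego_speed
    max_gap = standstill_gap + max_headway * ego_speed
    return min_gap, max_gap


def reward(ego_speed, space_gap, ego_accel, ego_fuel, follower_fuel,
           w_fuel=1.0, w_follow=0.5, w_accel=0.2, w_gap=5.0):
    """Hypothetical per-step reward; higher is better, all terms are penalties.

    follower_fuel: fuel rates of the human-driven vehicles behind the AV.
    """
    min_gap, max_gap = gap_bounds(ego_speed)
    # Leaving the allowed gap band is penalized, so stopping while the
    # leader drives away is no longer the cheapest option.
    gap_violation = 1.0 if (space_gap < min_gap or space_gap > max_gap) else 0.0
    return -(
        w_fuel * ego_fuel
        + w_follow * sum(follower_fuel)  # discourages selfish fuel savings
        + w_accel * ego_accel ** 2       # comfort and wave smoothing
        + w_gap * gap_violation
    )


print(reward(ego_speed=20.0, space_gap=40.0, ego_accel=0.0,
             ego_fuel=1.0, follower_fuel=[1.0, 1.0]))
```

With the fuel term alone, standing still would score best; the gap and follower terms are what make that strategy unattractive, and tuning their weights is the balancing act described above.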

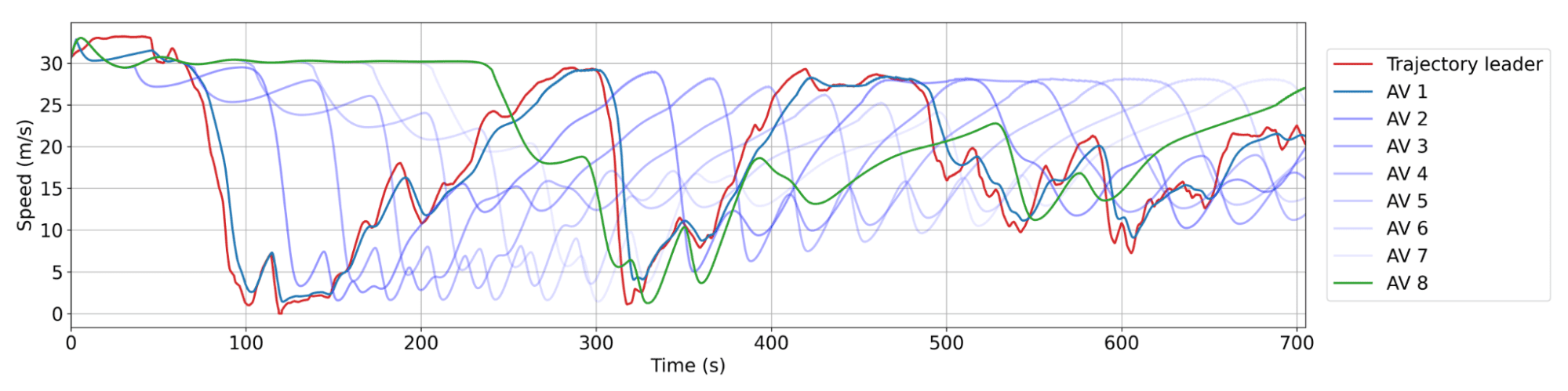

The typical behavior learned by the AVs is to maintain slightly larger gaps than human drivers, allowing them to absorb upcoming, possibly abrupt, traffic slowdowns more effectively. In simulation, this approach resulted in significant fuel savings of up to 20% across all road users in the most congested scenarios, with fewer than 5% of AVs on the road. And these AVs don’t have to be special vehicles! They can simply be standard consumer cars equipped with a smart adaptive cruise control (ACC), which is what we tested at scale.

Smoothing behavior of RL AVs. Red: a human trajectory from the dataset. Blue: successive AVs in the platoon, where AV 1 is the closest behind the human trajectory. There are typically between 20 and 25 human vehicles between AVs. Each AV doesn’t slow down as much or accelerate as fast as its leader, leading to decreasing wave amplitude over time and thus energy savings.

100 AV field test: deploying RL at scale

Our 100 cars parked at our operational center during the experiment week.

Given the promising simulation results, the natural next step was to bridge the gap from simulation to the highway. We took the trained RL controllers and deployed them on 100 vehicles on I-24 during peak traffic hours over several days. This large-scale experiment, which we called the MegaVanderTest, is the largest mixed-autonomy traffic-smoothing experiment ever conducted.

Before deploying RL controllers in the field, we trained and evaluated them extensively in simulation and validated them on the hardware. Overall, the steps towards deployment involved:

- Training in data-driven simulations: We used highway traffic data from I-24 to create a training environment with realistic wave dynamics, then validated the trained agent’s performance and robustness in a variety of new traffic scenarios.

- Deployment on hardware: After being validated in robotics software, the trained controller is uploaded onto the car and is able to control the set speed of the vehicle. We operate through the vehicle’s on-board cruise control, which acts as a lower-level safety controller.

- Modular control framework: One key challenge during the test was not having access to the leading vehicle information sensors. To overcome this, the RL controller was integrated into a hierarchical system, the MegaController, which combines a speed planner guide that accounts for downstream traffic conditions with the RL controller as the final decision maker.

- Validation on hardware: The RL agents were designed to operate in an environment where most vehicles were human-driven, requiring robust policies that adapt to unpredictable behavior. We verified this by driving the RL-controlled vehicles on the road under careful human supervision, making changes to the control based on feedback.

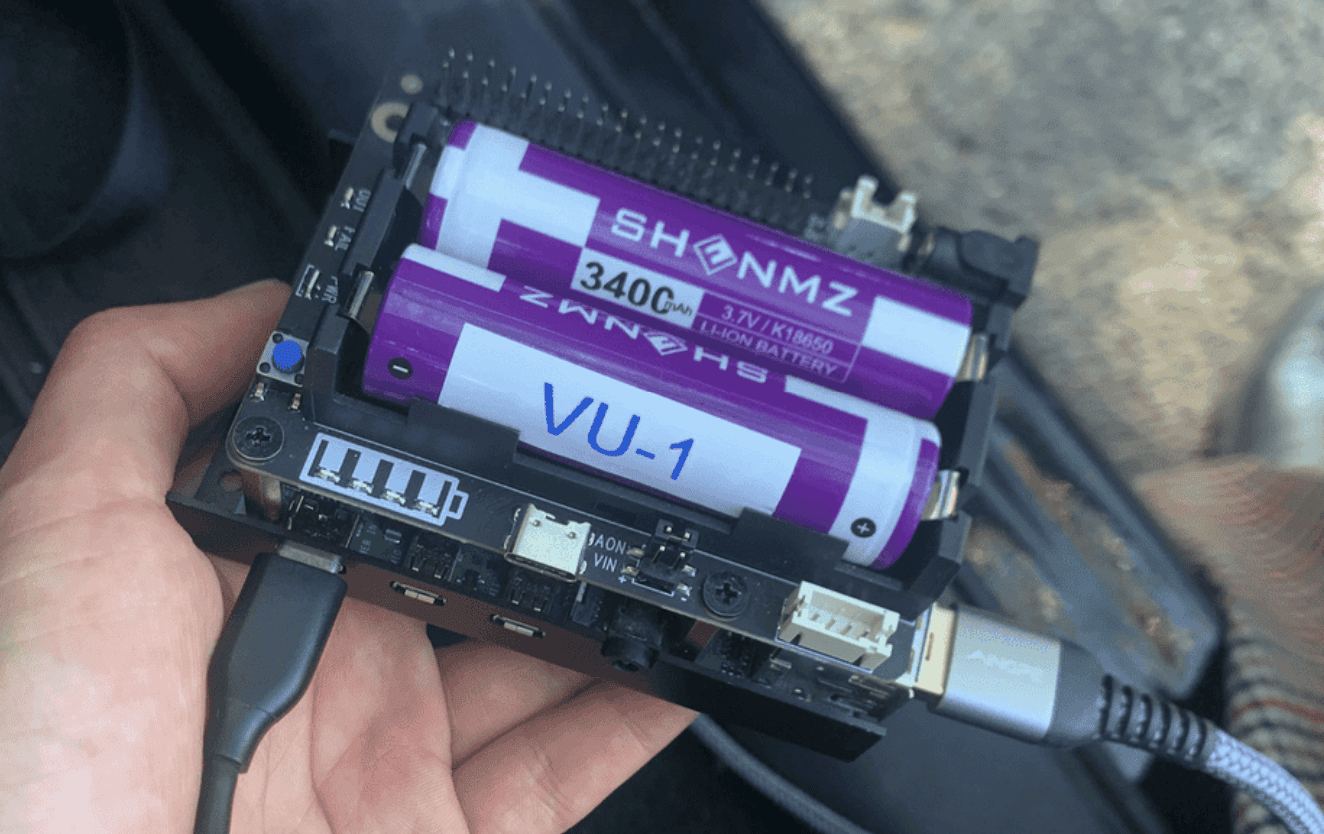

Each of the 100 cars is connected to a Raspberry Pi, on which the RL controller (a small neural network) is deployed.

The RL controller directly controls the onboard adaptive cruise control (ACC) system, setting its speed and desired following distance.

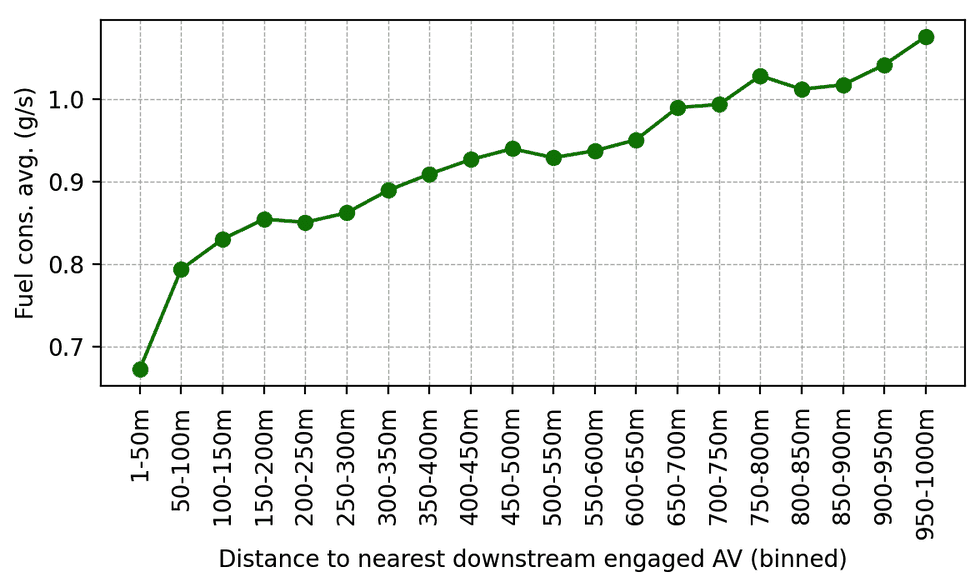

Once validated, the RL controllers were deployed on 100 cars and driven on I-24 during morning rush hour. Surrounding traffic was unaware of the experiment, ensuring unbiased driver behavior. Data was collected during the experiment from dozens of overhead cameras placed along the highway, which led to the extraction of millions of individual vehicle trajectories through a computer vision pipeline. Metrics computed on these trajectories indicate a trend of reduced fuel consumption around AVs, as expected from simulation results and previous smaller validation deployments. For instance, we can observe that the closer people are driving behind our AVs, the less fuel they appear to consume on average (which is calculated using a calibrated energy model):

Average fuel consumption as a function of distance behind the nearest engaged RL-controlled AV in the downstream traffic. As human drivers get further away behind AVs, their average fuel consumption increases.

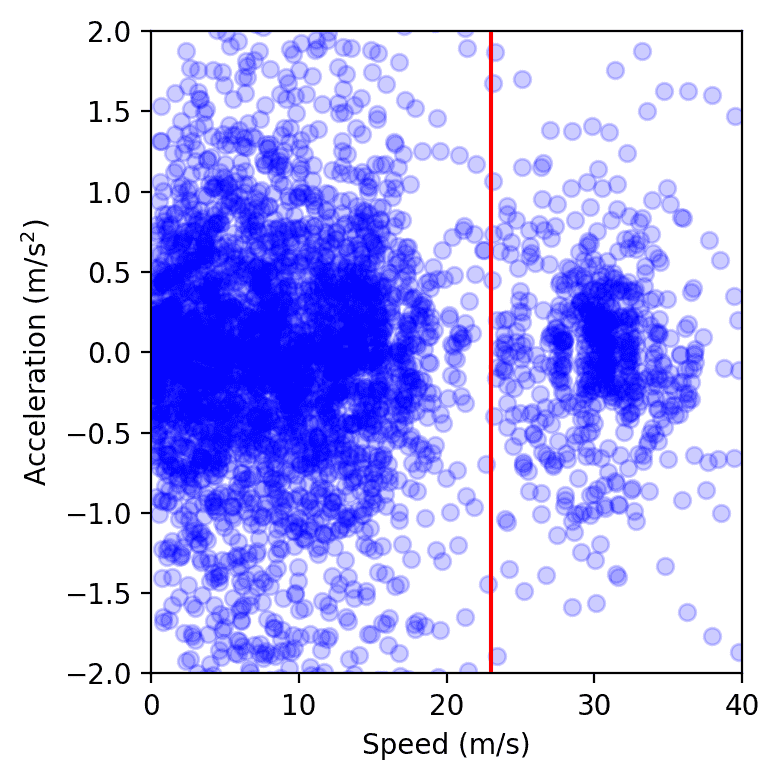

Another way to measure the impact is to look at the variance of the speeds and accelerations: the lower the variance, the smaller the amplitude of the waves should be, which is what we observe in the field test data. Overall, although getting precise measurements from a large amount of camera video data is complicated, we observe a trend of 15 to 20% energy savings around our controlled cars.

Data points from all vehicles on the highway over a single day of the experiment, plotted in speed-acceleration space. The cluster to the left of the red line represents congestion, while the one on the right corresponds to free flow. We observe that the congestion cluster is smaller when AVs are present, as measured by computing the area of a soft convex envelope or by fitting a Gaussian kernel.
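As a minimal sketch of the variance metric, the function below takes one vehicle's speed samples, derives accelerations by finite differences, and returns both variances. The function name, the sampling interval `dt`, and the toy trajectories are assumptions for illustration; the actual analysis pipeline operates on camera-derived trajectories and is not reproduced here.

```python
from statistics import pvariance


def wave_intensity(speeds, dt=0.1):
    """Return (speed variance, acceleration variance) for one trajectory.

    speeds: speed samples in m/s, taken every `dt` seconds (assumed interval).
    """
    if len(speeds) < 3:
        raise ValueError("need at least three speed samples")
    # Finite-difference accelerations between consecutive samples.
    accels = [(b - a) / dt for a, b in zip(speeds, speeds[1:])]
    return pvariance(speeds), pvariance(accels)


# Toy trajectories: a strong stop-and-go pattern versus a damped one.
stop_and_go = [10.0, 20.0, 10.0, 20.0, 10.0]
smoothed = [14.0, 16.0, 14.0, 16.0, 14.0]
print(wave_intensity(stop_and_go, dt=1.0))
print(wave_intensity(smoothed, dt=1.0))
```

Lower values on both components indicate a flatter speed profile, which is the signature of damped waves.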

Final thoughts

The 100-car field operational test was decentralized, with no explicit cooperation or communication between AVs, reflective of current autonomy deployment, and it brings us one step closer to smoother, more energy-efficient highways. Yet there is still vast potential for improvement. Scaling up simulations to be faster and more accurate, with better human-driving models, is crucial for bridging the simulation-to-reality gap. Equipping AVs with additional traffic data, whether through advanced sensors or centralized planning, could further improve the performance of the controllers. For instance, while multi-agent RL is promising for improving cooperative control strategies, it remains an open question how enabling explicit communication between AVs over 5G networks could further improve stability and mitigate stop-and-go waves. Crucially, our controllers integrate seamlessly with existing adaptive cruise control (ACC) systems, making field deployment feasible at scale. The more vehicles equipped with smart traffic-smoothing control, the fewer waves we’ll see on our roads, meaning less pollution and more fuel savings for everyone!

Many contributors took part in making the MegaVanderTest happen! The full list is available on the CIRCLES project page, along with more details about the project.

Read more: [paper]