Unlock faster, more efficient reasoning with Phi-4-mini-flash-reasoning, optimized for edge, mobile, and real-time applications.

Cutting-edge architecture redefines speed for reasoning models

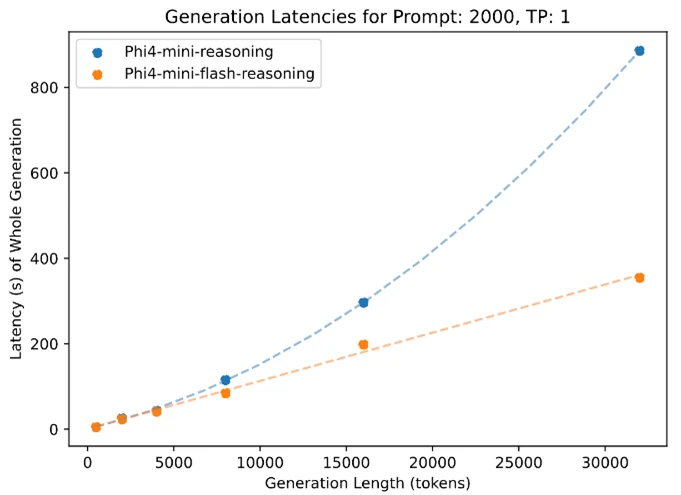

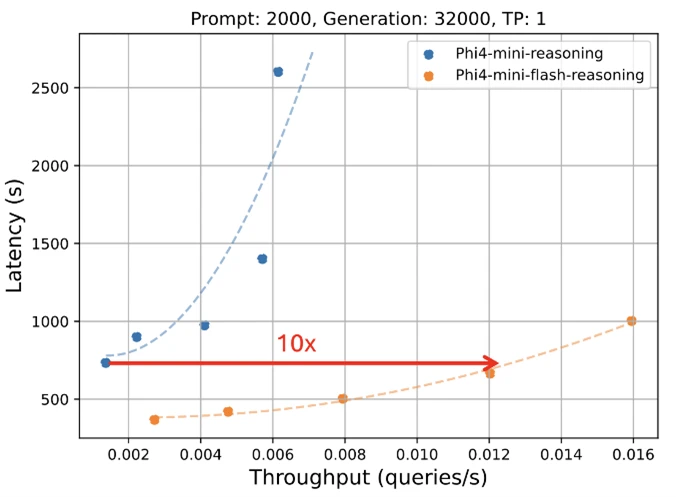

Microsoft is excited to unveil a new addition to the Phi model family: Phi-4-mini-flash-reasoning. Purpose-built for scenarios where compute, memory, and latency are tightly constrained, this new model is engineered to bring advanced reasoning capabilities to edge devices, mobile applications, and other resource-constrained environments. It follows Phi-4-mini but is built on a new hybrid architecture that achieves up to 10 times higher throughput and a 2 to 3 times average reduction in latency, enabling significantly faster inference without sacrificing reasoning performance. Ready to power real-world solutions that demand efficiency and flexibility, Phi-4-mini-flash-reasoning is available today on Azure AI Foundry, NVIDIA API Catalog, and Hugging Face.

Efficiency without compromise

Phi-4-mini-flash-reasoning balances math reasoning ability with efficiency, making it potentially suitable for educational applications, real-time logic-based applications, and more.

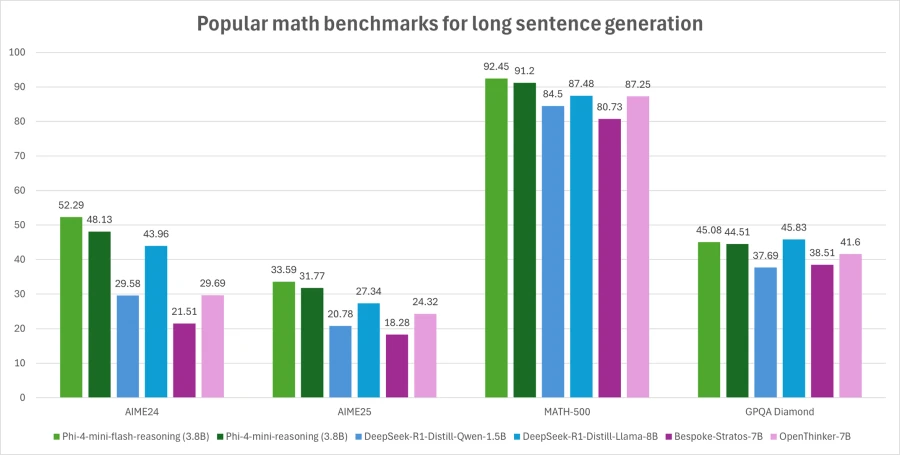

Similar to its predecessor, Phi-4-mini-flash-reasoning is a 3.8 billion parameter open model optimized for advanced math reasoning. It supports a 64K token context length and is fine-tuned on high-quality synthetic data to deliver reliable, logic-intensive performance in deployment.

What’s new?

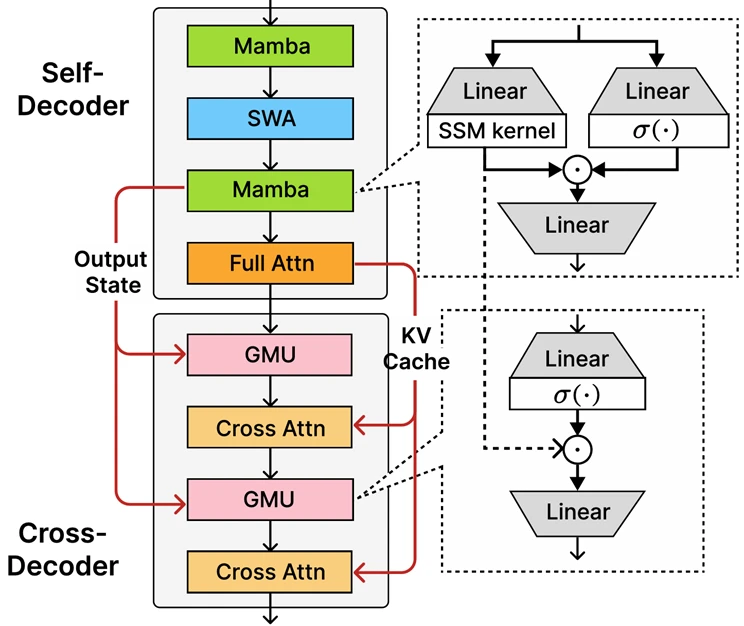

At the core of Phi-4-mini-flash-reasoning is the newly introduced decoder-hybrid-decoder architecture, SambaY, whose central innovation is the Gated Memory Unit (GMU), a simple yet effective mechanism for sharing representations between layers. The architecture includes a self-decoder that combines Mamba (a State Space Model) and Sliding Window Attention (SWA), along with a single layer of full attention. It also includes a cross-decoder that interleaves expensive cross-attention layers with the new, efficient GMUs. Together, these GMU modules drastically improve decoding efficiency, boost long-context retrieval performance, and help the model deliver strong performance across a wide range of tasks.
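To make the GMU idea more concrete, here is a minimal Python sketch of a single gated memory read. It assumes a gating form along the lines of the one described in the SambaY research paper: the current layer's input is projected, passed through a SiLU activation, multiplied element-wise with a memory state produced by an earlier layer, and projected back to the model dimension. The names `gated_memory_unit`, `w_gate`, and `w_out` are hypothetical, and the plain-list matrices stand in for learned weights; this is an illustration, not the Phi-4-mini-flash-reasoning implementation.

```python
import math
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def silu(v: float) -> float:
    """SiLU (swish) activation: v * sigmoid(v)."""
    return v / (1.0 + math.exp(-v))


def matvec(w: Matrix, x: Vector) -> Vector:
    """Multiply a row-major matrix (out_dim x in_dim) by a vector."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def gated_memory_unit(x: Vector, memory: Vector, w_gate: Matrix, w_out: Matrix) -> Vector:
    """Hypothetical GMU sketch: y = W_out (memory * SiLU(W_gate x)).

    x       -- current layer's input, length d_model
    memory  -- state shared from an earlier layer (assumed here), length d_mem
    w_gate  -- d_mem x d_model projection that produces the gate
    w_out   -- d_model x d_mem output projection
    """
    if len(memory) != len(w_gate):
        raise ValueError("memory and gate dimensions must match")
    gate = [silu(g) for g in matvec(w_gate, x)]   # input-dependent gate
    gated = [m * g for m, g in zip(memory, gate)]  # element-wise gating of the shared memory
    return matvec(w_out, gated)                    # project back to the model dimension


if __name__ == "__main__":
    x = [1.0, -0.5]
    memory = [0.5, 2.0, -1.0]
    w_gate = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
    w_out = [[1.0, 0.0, 0.0], [0.0, 1.0, 1.0]]
    print(gated_memory_unit(x, memory, w_gate, w_out))
```

In this sketch a GMU costs two small matrix-vector products per token no matter how long the context is, whereas a cross-attention layer has to read a key-value cache that grows with the context. That contrast is one way to build intuition for the decoding gains described above.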

Key benefits of the SambaY architecture include:

- Enhanced decoding efficiency.

- Preserves linear prefilling time complexity.

- Increased scalability and enhanced long-context performance.

- Up to 10 times higher throughput.
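To illustrate why windowed attention scales differently from full attention during prefill, the toy cost model below counts query-key score pairs for one causal attention layer. The window width in the example is a hypothetical value, not the model's actual configuration, and the sketch ignores Mamba, the single full-attention layer, and the rest of SambaY, so it shows a scaling trend only, not the model's real prefill cost.

```python
def full_causal_pairs(n: int) -> int:
    """Query-key pairs scored by one causal full-attention layer over n prompt tokens."""
    # Token i attends to tokens 0..i, i.e. i + 1 keys: 1 + 2 + ... + n.
    return n * (n + 1) // 2


def sliding_window_pairs(n: int, window: int) -> int:
    """Query-key pairs for causal sliding window attention with the given window width."""
    # Token i attends to at most `window` most recent tokens (itself included),
    # so the first `window` tokens behave like full attention and the rest cost `window` each.
    if n <= window:
        return full_causal_pairs(n)
    return full_causal_pairs(window) + (n - window) * window


if __name__ == "__main__":
    window = 512  # hypothetical window width, for illustration only
    for n in (4_096, 16_384, 65_536):
        print(n, full_causal_pairs(n), sliding_window_pairs(n, window))
```

Once the prompt is longer than the window, each extra token adds a fixed `window` pairs, so the sliding-window count grows linearly while the full-attention count grows quadratically.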

Phi-4-mini-flash-reasoning benchmarks

Like all models in the Phi family, Phi-4-mini-flash-reasoning is deployable on a single GPU, making it accessible for a broad range of use cases. What sets it apart, however, is its architectural advantage: the new model achieves significantly lower latency and higher throughput than Phi-4-mini-reasoning, particularly in long-context generation and latency-sensitive reasoning tasks.

This makes Phi-4-mini-flash-reasoning a compelling option for developers and enterprises looking to deploy intelligent systems that require fast, scalable, and efficient reasoning, whether on premises or on-device.
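As a rough sanity check on the single-GPU claim, the arithmetic below estimates how much memory 3.8 billion weights occupy at a few common numeric precisions. The precisions listed are illustrative assumptions, not published deployment formats, and the estimate covers weights only: the KV cache, activations, and runtime overhead come on top of it, especially near the 64K-token context limit.

```python
PARAMS = 3.8e9  # parameter count stated for Phi-4-mini-flash-reasoning

# Bytes per parameter for a few common formats (illustrative assumptions).
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


if __name__ == "__main__":
    for fmt, nbytes in BYTES_PER_PARAM.items():
        print(f"{fmt:>5}: {weight_memory_gib(PARAMS, nbytes):6.2f} GiB")
```

At bf16 this comes to roughly 7 GiB of weights, which fits on a single GPU with moderate memory; lower-precision formats shrink the footprint further, which matters most for the edge and mobile targets mentioned above.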

What are the potential use cases?

Thanks to its reduced latency, improved throughput, and focus on math reasoning, the model is ideal for:

- Adaptive learning platforms, where real-time feedback loops are essential.

- On-device reasoning assistants, such as mobile study aids or edge-based logic agents.

- Interactive tutoring systems that dynamically adjust content difficulty based on a learner's performance.

Its strength in math and structured reasoning makes it especially valuable for education technology, lightweight simulations, and automated assessment tools that require reliable logic inference with fast response times.

Developers are encouraged to connect with peers and Microsoft engineers through the Microsoft Developer Discord community to ask questions, share feedback, and explore real-world use cases together.

Microsoft's commitment to trustworthy AI

Organizations across industries are leveraging Azure AI and Microsoft 365 Copilot capabilities to drive growth, increase productivity, and create value-added experiences.

We're committed to helping organizations use and build AI that is trustworthy, meaning it is secure, private, and safe. We bring best practices and learnings from decades of researching and building AI products at scale to provide industry-leading commitments and capabilities that span our three pillars of security, privacy, and safety. Trustworthy AI is only possible when you combine our commitments, such as our Secure Future Initiative and our responsible AI principles, with our product capabilities to unlock AI transformation with confidence.

Phi models are developed in accordance with Microsoft AI principles: accountability, transparency, fairness, reliability and safety, privacy and security, and inclusiveness.

The Phi model family, including Phi-4-mini-flash-reasoning, employs a robust safety post-training strategy that integrates Supervised Fine-Tuning (SFT), Direct Preference Optimization (DPO), and Reinforcement Learning from Human Feedback (RLHF). These techniques are applied using a combination of open-source and proprietary datasets, with a strong emphasis on ensuring helpfulness, minimizing harmful outputs, and addressing a broad range of safety categories. Developers are encouraged to apply responsible AI best practices tailored to their specific use cases and cultural contexts.

Read the model card to learn more about risks and mitigation strategies.

Learn more about the new model

Create with Azure AI Foundry