TL;DR:

CIOs face mounting pressure to adopt agentic AI, but skipping steps leads to cost overruns, compliance gaps, and complexity you can’t unwind. This post outlines a smarter, staged path to help you scale AI with control, clarity, and confidence.

AI leaders are under immense pressure to implement solutions that are both cost-effective and secure. The challenge lies not only in adopting AI but also in keeping pace with advancements that can feel overwhelming.

This often leads to the temptation to dive headfirst into the latest innovations to stay competitive.

However, jumping straight into complex multi-agent systems without a solid foundation is like constructing the upper floors of a building before laying its foundation: the result is a structure that’s unstable and potentially hazardous.

In this post, we walk through how to guide your organization through each stage of agentic AI maturity securely, efficiently, and without costly missteps.

Understanding key AI concepts

Before delving into the stages of AI maturity, it’s essential to establish a clear understanding of key concepts:

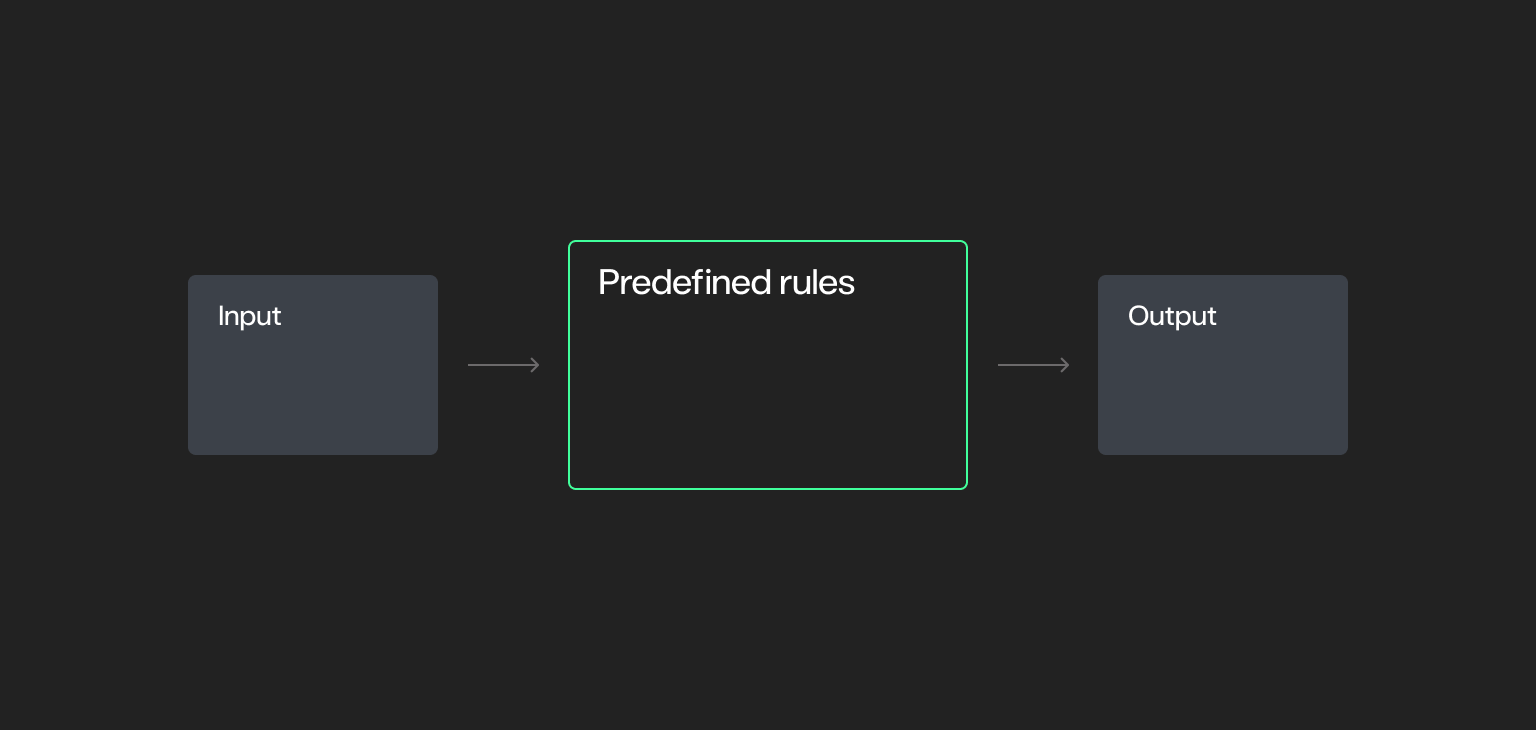

Deterministic systems

Deterministic systems are the foundational building blocks of automation.

- Follow a fixed set of predefined rules where the outcome is fully predictable. Given the same input, the system will always produce the same output.

- Do not incorporate randomness or ambiguity.

- While all deterministic systems are rule-based, not all rule-based systems are deterministic.

- Ideal for tasks requiring consistency, traceability, and control.

- Examples: Basic automation scripts, legacy enterprise software, and scheduled data transfer processes.
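A minimal Python sketch of a deterministic rule, for illustration only: the function name, size threshold, and batch-window labels below are hypothetical. What matters is that the output depends on nothing but the inputs, so the same call always returns the same answer.

```python
from datetime import date


def transfer_window(file_size_mb: int, run_date: date) -> str:
    """Pick a batch window for a scheduled data transfer (hypothetical rules).

    No randomness and no external state: identical inputs always
    produce identical outputs.
    """
    if run_date.weekday() >= 5:  # weekday() is 5 for Saturday, 6 for Sunday
        return "weekend-bulk"
    if file_size_mb > 500:  # large files wait for the overnight window
        return "overnight"
    return "hourly"


print(transfer_window(800, date(2024, 1, 3)))  # 2024-01-03 is a Wednesday: prints "overnight"
```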

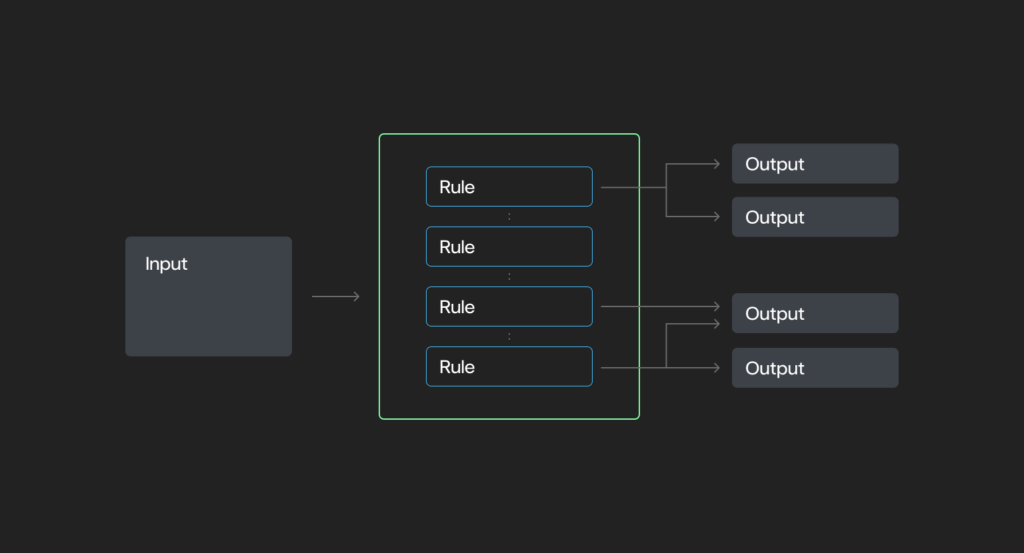

Rule-based systems

A broader category that includes deterministic systems but can also introduce variability (e.g., stochastic behavior).

- Operate based on a set of predefined conditions and actions: “if X, then Y.”

- May incorporate deterministic logic or stochastic elements, depending on design.

- Powerful for enforcing structure.

- Lack autonomy or reasoning capabilities.

- Examples: Email filters, Robotic Process Automation (RPA), and complex infrastructure protocols like internet routing.
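By contrast, a rule-based system can include a stochastic element and still follow “if X, then Y” rules. In this hypothetical, simplified router sketch, the rules are fixed, but when a prefix has two equally good gateways, one is chosen at random. (Real routers usually spread traffic with per-flow hashing; the random draw here simply stands in for any stochastic component, and the gateway names are made up.)

```python
import random
from typing import Dict, List

# Prefix -> candidate next hops. Gateway names are hypothetical.
ROUTES: Dict[str, List[str]] = {
    "10.0.": ["gw-a", "gw-b"],  # two equal-cost paths
    "192.168.": ["gw-c"],       # a single path
}


def next_hop(dest_ip: str, rng: random.Random) -> str:
    """Apply the first matching rule; break ties between equal paths at random."""
    for prefix, hops in ROUTES.items():
        if dest_ip.startswith(prefix):  # if X ...
            return rng.choice(hops)     # ... then Y (possibly chosen at random)
    return "default-gw"                 # fallback rule when nothing matches
```

Passing a seeded `random.Random` makes a test run reproducible, while unseeded use gives the variable behavior that separates this system from a deterministic one.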

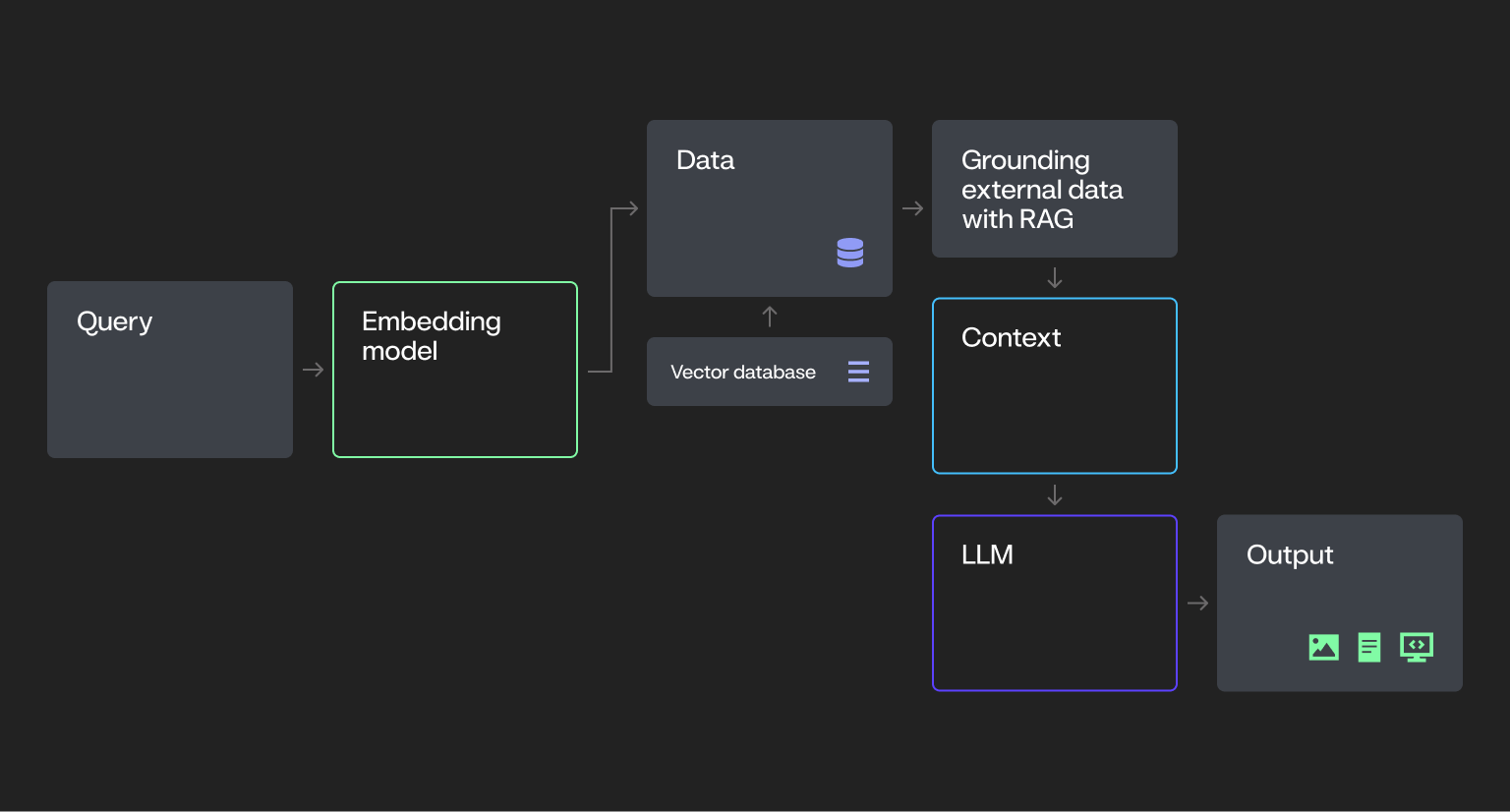

Process AI

A step beyond rule-based systems.

- Powered by Large Language Models (LLMs) and Vision-Language Models (VLMs).

- Trained on extensive datasets to generate diverse content (e.g., text, images, code) in response to input prompts.

- Responses are grounded in pre-trained knowledge and can be enriched with external data via techniques like Retrieval-Augmented Generation (RAG).

- Do not make autonomous decisions; they operate only when prompted.

- Examples: Generative AI chatbots, summarization tools, and content-generation applications powered by LLMs.
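The RAG enrichment described above can be sketched in a few lines. This is a simplified illustration, not a production pattern: it assumes a tiny in-memory corpus of hypothetical documents and uses bag-of-words cosine similarity where a real system would use an embedding model and a vector database. It stops at the grounded prompt, since the LLM call itself depends on your provider.

```python
import math
import re
from collections import Counter
from typing import List

# Hypothetical internal documents standing in for a vector database.
DOCS: List[str] = [
    "Warehouse A holds 120 units of SKU-42.",
    "Vendor Acme ships every Tuesday.",
    "Warehouse B holds 30 units of SKU-42.",
]


def vectorize(text: str) -> Counter:
    """Bag-of-words term counts (a stand-in for a learned embedding)."""
    return Counter(re.findall(r"[a-z0-9-]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_grounded_prompt(question: str, k: int = 2) -> str:
    """Retrieve the k most similar documents and prepend them to the question."""
    q = vectorize(question)
    ranked = sorted(DOCS, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    context = "\n".join(ranked[:k])
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

Note that nothing here acts on the answer: the output is text for a person (or an LLM) to read, which is exactly the Process AI boundary.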

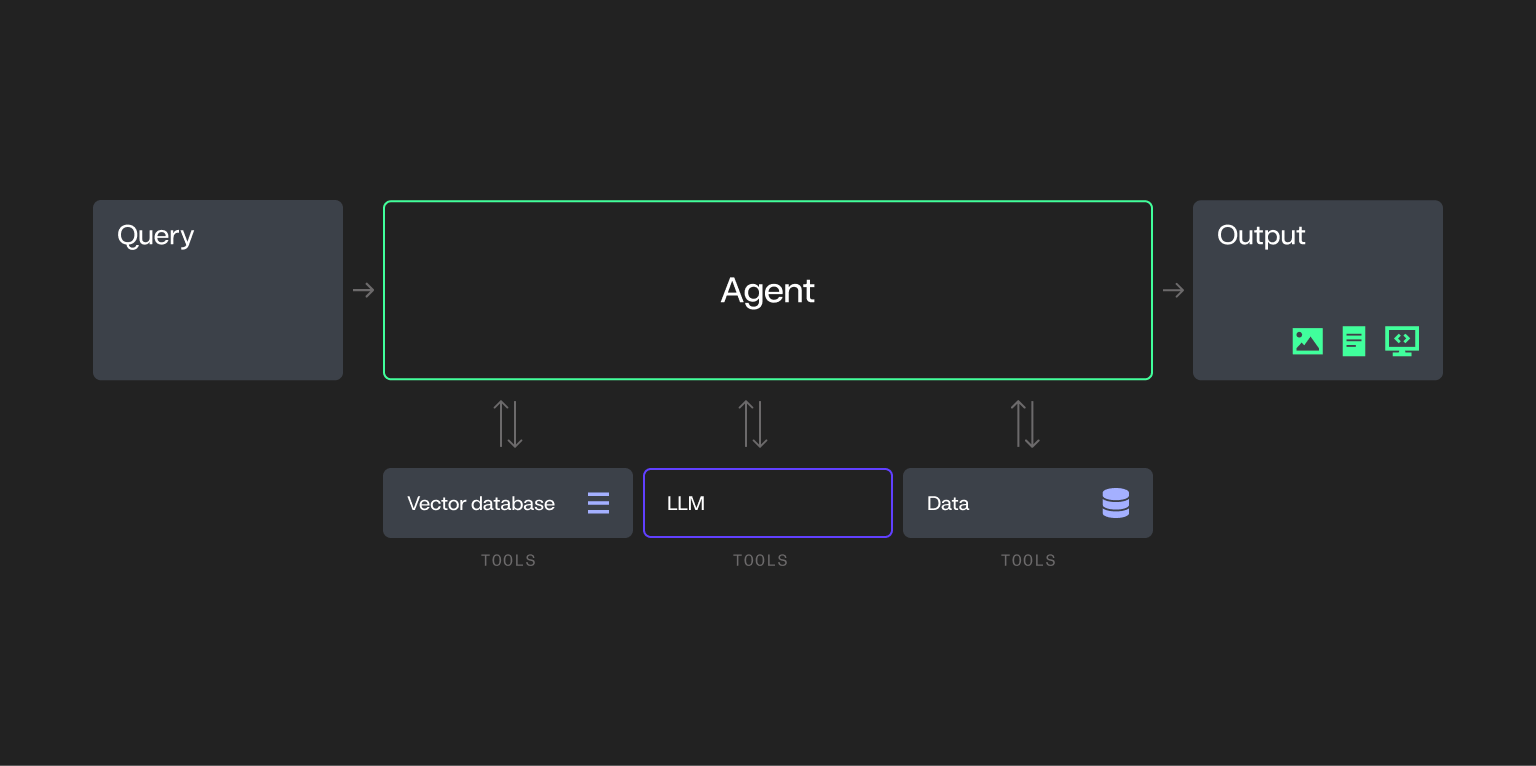

Single-agent systems

Introduce autonomy, planning, and tool usage, elevating foundational AI into more complex territory.

- AI-driven programs designed to perform specific tasks independently.

- Can integrate with external tools and systems (e.g., databases or APIs) to complete tasks.

- Do not collaborate with other agents; they operate alone within a task framework.

- Not to be confused with RPA: RPA is ideal for highly standardized, rules-based tasks where the logic doesn’t require reasoning or adaptation.

- Examples: AI-driven assistants for forecasting, monitoring, or automated task execution that operate independently.

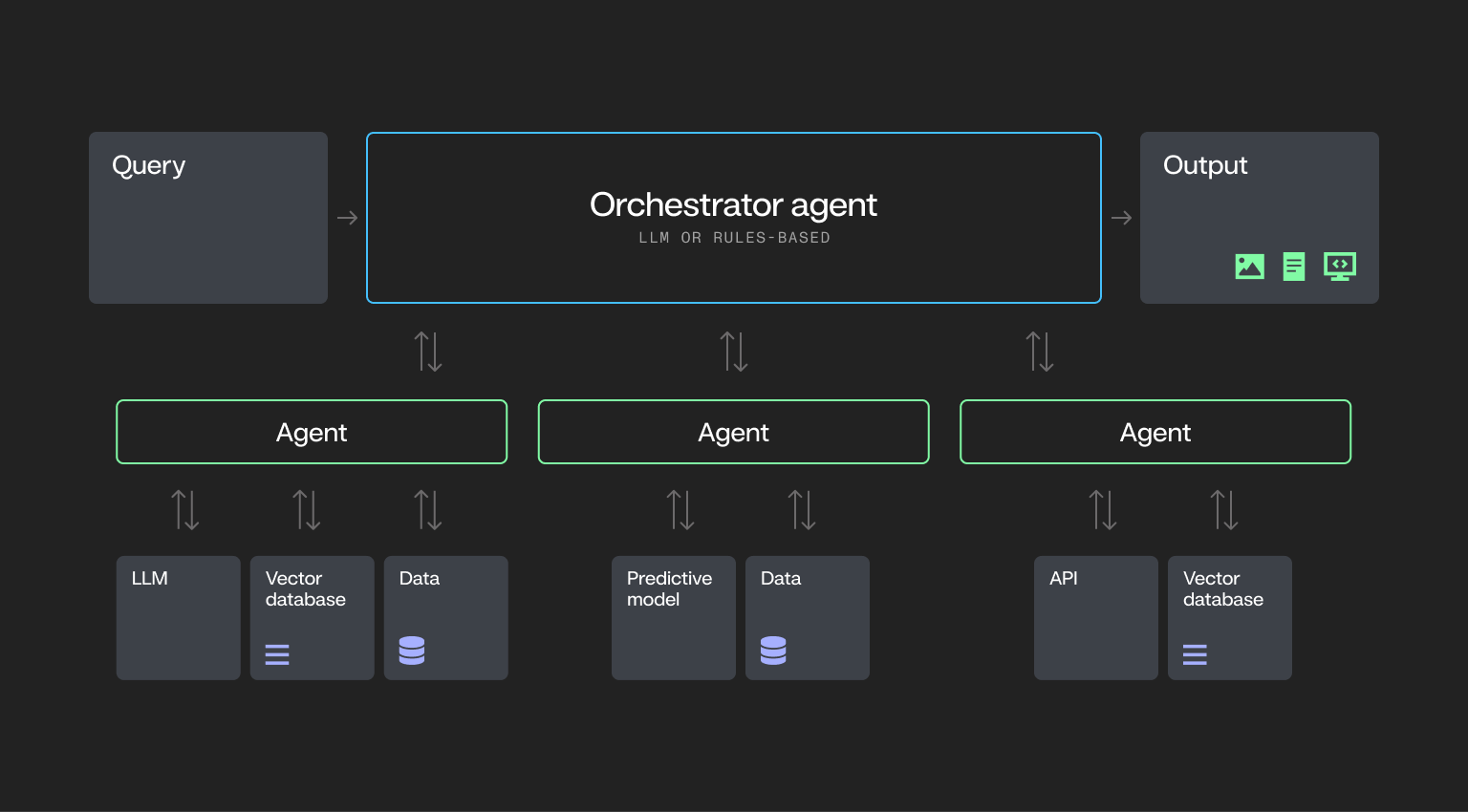

Multi-agent systems

The most advanced stage, featuring distributed decision-making, autonomous coordination, and dynamic workflows.

- Composed of multiple AI agents that interact and collaborate to achieve complex objectives.

- Agents dynamically decide which tools to use, when, and in what sequence.

- Capabilities include planning, reflection, memory utilization, and cross-agent collaboration.

- Examples: Distributed AI systems coordinating across departments like supply chain, customer service, or fraud detection.

What makes an AI system truly agentic?

To be considered truly agentic, an AI system typically demonstrates core capabilities that enable it to operate with autonomy and adaptability:

- Planning. The system can break down a task into steps and create a plan of execution.

- Tool calling. The AI selects and uses tools (e.g., models, functions) and initiates API calls to interact with external systems to complete tasks.

- Adaptability. The system can adjust its actions in response to changing inputs or environments, ensuring effective performance across varied contexts.

- Memory. The system retains relevant information across steps or sessions.

These characteristics align with widely accepted definitions of agentic AI, including frameworks discussed by AI leaders such as Andrew Ng.
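The four capabilities can be seen together in a deliberately small agent loop. This is a hypothetical sketch, not a reference design: the planner is a hard-coded stand-in for an LLM, and both tools (`inventory_api`, `inventory_snapshot`) are made-up stubs, with the primary one simulating an outage so the agent has something to adapt to.

```python
from typing import Callable, Dict, List, Optional, Tuple


def inventory_api(sku: str) -> int:
    """Hypothetical primary tool; simulates an outage."""
    raise ConnectionError("inventory API timed out")


def inventory_snapshot(sku: str) -> int:
    """Hypothetical fallback tool backed by a nightly snapshot."""
    return {"SKU-42": 150}.get(sku, 0)


TOOLS: Dict[str, Callable[[str], int]] = {
    "inventory_api": inventory_api,
    "inventory_snapshot": inventory_snapshot,
}
FALLBACKS = {"inventory_api": "inventory_snapshot"}


def plan(sku: str) -> List[Tuple[str, str]]:
    """Planning: a fixed stand-in for an LLM that decomposes the goal into tool steps."""
    return [("inventory_api", sku)]


def run_agent(sku: str) -> Tuple[Optional[int], List[str]]:
    memory: List[str] = []  # Memory: a record of each step, kept across the run
    steps = plan(sku)
    result: Optional[int] = None
    while steps:
        tool, arg = steps.pop(0)
        try:
            result = TOOLS[tool](arg)  # Tool calling
            memory.append(f"{tool}({arg}) -> {result}")
        except ConnectionError as err:
            memory.append(f"{tool}({arg}) failed: {err}")
            if tool in FALLBACKS:  # Adaptability: re-plan around the failure
                steps.insert(0, (FALLBACKS[tool], arg))
    return result, memory
```

Calling `run_agent("SKU-42")` records the failed API call in memory, switches to the snapshot tool, and returns 150 along with that two-step history.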

With these definitions in mind, let’s explore the stages required to progress toward implementing multi-agent systems.

Understanding agentic AI maturity stages

For simplicity, we’ve divided the path to more complex agentic flows into three stages. Each stage presents unique challenges and opportunities regarding cost, security, and governance.

Stage 1: Process AI

What this stage looks like

In the Process AI stage, organizations typically pilot generative AI through isolated use cases like chatbots, document summarization, or internal Q&A. These efforts are often led by innovation teams or individual business units, with limited involvement from IT.

Deployments are built around a single LLM and operate outside core systems like ERP or CRM, making integration and oversight difficult.

Infrastructure is often pieced together, governance is informal, and security measures may be inconsistent.

Supply chain example for Process AI

In the Process AI stage, a supply chain team might use a generative AI-powered chatbot to summarize shipment data or answer basic vendor queries based on internal documents. This tool can pull in data through a RAG workflow to provide insights, but it doesn’t take any action autonomously.

For example, the chatbot could summarize inventory levels, predict demand based on historical trends, and generate a report for the team to review. However, the team must then decide what action to take (e.g., place restock orders or adjust supply levels).

The system simply provides insights; it doesn’t make decisions or take actions.
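To illustrate the “insights only” boundary, here is a hypothetical sketch in which a simple moving-average forecast stands in for the model’s demand prediction. The function returns a report and nothing else; any restock decision stays with the team.

```python
from statistics import mean
from typing import List


def inventory_report(sku: str, on_hand: int, weekly_sales: List[int], window: int = 4) -> str:
    """Summarize stock and projected demand; deliberately takes no action."""
    forecast = mean(weekly_sales[-window:])  # moving average of the last `window` weeks
    weeks_of_cover = on_hand / forecast if forecast else float("inf")
    return (
        f"{sku}: {on_hand} units on hand, forecast demand {forecast:.0f}/week, "
        f"about {weeks_of_cover:.1f} weeks of cover. Decision: for team review."
    )
```

For example, with 120 units on hand and recent weekly sales of 60, 40, 50, and 70, the report shows a forecast of 55 units per week and about 2.2 weeks of cover, and then stops.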

Common obstacles

While early AI projects can show promise, they often create operational blind spots that stall progress, drive up costs, and increase risk if left unaddressed.

- Data integration and quality. Most organizations struggle to unify data across disconnected systems, limiting the reliability and relevance of generative AI output.

- Scalability challenges. Pilot projects often stall when teams lack the infrastructure, access, or strategy to move from proof of concept to production.

- Inadequate testing and stakeholder alignment. Generative outputs are frequently released without rigorous QA or business user acceptance, leading to trust and adoption issues.

- Change management friction. As generative AI reshapes roles and workflows, poor communication and planning can create organizational resistance.

- Lack of visibility and traceability. Without model monitoring or auditability, it’s difficult to understand how decisions are made or to pinpoint where errors occur.

- Bias and fairness risks. Generative models can reinforce or amplify bias in training data, creating reputational, ethical, or compliance risks.

- Ethical and accountability gaps. AI-generated content can blur ethical lines or be misused, raising questions around responsibility and control.

- Regulatory complexity. Evolving global and industry-specific regulations make it difficult to ensure ongoing compliance at scale.

Tool and infrastructure requirements

Before advancing to more autonomous systems, organizations must ensure their infrastructure is equipped to support secure, scalable, and cost-effective AI deployment.

- Fast, flexible vector database updates to manage embeddings as new data becomes available.

- Scalable data storage to support large datasets used for training, enrichment, and experimentation.

- Sufficient compute resources (CPUs/GPUs) to power training, tuning, and running models at scale.

- Security frameworks with enterprise-grade access controls, encryption, and monitoring to protect sensitive data.

- Multi-model flexibility to test and evaluate different LLMs and determine the best fit for specific use cases.

- Benchmarking tools to visualize and compare model performance across evaluations and testing.

- Realistic, domain-specific data to test responses, simulate edge cases, and validate outputs.

- A QA prototyping environment that supports quick setup, user acceptance testing, and iterative feedback.

- Embedded security, AI, and business logic for consistency, guardrails, and alignment with organizational standards.

- Intervention and moderation tools that let IT and security teams monitor and control AI outputs in real time.

- Robust data integration capabilities to connect sources across the organization and ensure high-quality inputs.

- Elastic infrastructure to scale with demand without compromising performance or availability.

- Compliance and audit tooling that enables documentation, change tracking, and regulatory adherence.

Preparing for the next stage

To build on early generative AI efforts and prepare for more autonomous systems, organizations must lay a solid operational and organizational foundation.

- Invest in AI-ready data. It doesn’t have to be perfect, but it must be accessible, structured, and secure to support future workflows.

- Use vector database visualizations. These help teams identify knowledge gaps and validate the relevance of generative responses.

- Apply business-driven QA/UAT. Prioritize acceptance testing with the end users who will rely on generative output, not just technical teams.

- Stand up a secure AI registry. Track model versions, prompts, outputs, and usage across the organization to enable traceability and auditing.

- Implement baseline governance. Establish foundational frameworks like role-based access control (RBAC), approval flows, and data lineage tracking.

- Create repeatable workflows. Standardize the AI development process to move beyond one-off experimentation and enable scalable output.

- Build traceability into generative AI usage. Ensure transparency around data sources, prompt construction, output quality, and user activity.

- Mitigate bias early. Use diverse, representative datasets and regularly audit model outputs to identify and address fairness risks.

- Gather structured feedback. Establish feedback loops with end users to catch quality issues, guide improvements, and refine use cases.

- Encourage cross-functional oversight. Involve legal, compliance, data science, and business stakeholders to guide strategy and ensure alignment.
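A secure AI registry with baseline RBAC can start small. The sketch below is hypothetical and in-memory only (a real registry would persist records and integrate with your identity provider); the role names and `AIRegistry` class are illustrative. It versions prompts per asset, requires a separate role for approval, and logs every attempted action, including denied ones.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set, Tuple

# Hypothetical role -> permitted actions mapping.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "viewer": {"read"},
    "developer": {"read", "register"},
    "approver": {"read", "register", "approve"},
}


@dataclass
class AIRegistry:
    """In-memory sketch of a registry that versions prompts and audits every action."""
    entries: Dict[Tuple[str, int], dict] = field(default_factory=dict)
    audit_log: List[tuple] = field(default_factory=list)

    def _authorize(self, user: str, role: str, action: str) -> None:
        allowed = action in ROLE_PERMISSIONS.get(role, set())
        # Every attempt is logged, including denied ones, for later auditing.
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), user, action, allowed))
        if not allowed:
            raise PermissionError(f"{user} ({role}) may not {action}")

    def register(self, user: str, role: str, asset: str, prompt: str) -> int:
        self._authorize(user, role, "register")
        version = 1 + sum(1 for name, _ in self.entries if name == asset)
        self.entries[(asset, version)] = {"prompt": prompt, "owner": user, "approved": False}
        return version

    def approve(self, user: str, role: str, asset: str, version: int) -> None:
        self._authorize(user, role, "approve")  # approval flow: needs a separate role
        self.entries[(asset, version)]["approved"] = True
```

Separating `register` from `approve` is the design choice that matters here: the person who writes a prompt cannot also sign it off, and the audit log shows who tried.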

Key takeaways

Process AI is where most organizations begin, but it’s also where many get stuck. Without strong data foundations, clear governance, and scalable workflows, early experiments can introduce more risk than value.

To move forward, CIOs need to shift from exploratory use cases to enterprise-ready systems, with the infrastructure, oversight, and cross-functional alignment required to support safe, secure, and cost-effective AI adoption at scale.

Stage 2: Single-agent systems

What this stage looks like

At this stage, organizations begin tapping into true agentic AI by deploying single-agent systems that can act independently to complete tasks. These agents are capable of planning, reasoning, and calling tools like APIs or databases to get work done without human involvement.

Unlike earlier generative systems that wait for prompts, single-agent systems can decide when and how to act within a defined scope.

This marks a clear step into autonomous operations and a critical inflection point in an organization’s AI maturity.

Supply chain example for single-agent systems

Let’s revisit the supply chain example. With a single-agent system in place, the team can now manage inventory autonomously. The system monitors real-time stock levels across regional warehouses, forecasts demand using historical trends, and places restock orders automatically via an integrated procurement API, without human input.

Unlike the Process AI stage, where a chatbot only summarizes data or answers queries based on prompts, the single-agent system acts autonomously. It makes decisions, adjusts inventory, and places orders within a predefined workflow.

However, because the agent is making independent decisions, any errors in configuration or missed edge cases (e.g., unexpected demand spikes) could result in issues like stockouts, overordering, or unnecessary costs.

This is a critical shift. It’s no longer just about providing information; it’s about the system making decisions and executing actions, which makes governance, monitoring, and guardrails more important than ever.
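The contrast with the Process AI stage shows up clearly in code. In this hypothetical sketch, a reorder-point policy stands in for the agent’s decision logic and `place_order` stands in for the procurement API; the order cap and spike threshold are assumed values. The agent acts on its own in routine cases, but guardrails hand demand spikes and oversized orders to a human.

```python
from statistics import mean
from typing import List

MAX_ORDER_UNITS = 500  # hypothetical hard cap per purchase order
SPIKE_FACTOR = 2.0     # latest week above 2x the trailing average counts as a spike


def place_order(sku: str, qty: int) -> str:
    """Stand-in for a call to an integrated procurement API (hypothetical)."""
    return f"PO for {qty} x {sku}"


def restock_agent(sku: str, on_hand: int, weekly_sales: List[int], lead_time_weeks: int = 2) -> str:
    """Decide and act autonomously, escalating cases outside the guardrails.

    weekly_sales must hold at least two weeks, oldest first.
    """
    baseline = mean(weekly_sales[:-1])  # trailing average, excluding the latest week
    latest = weekly_sales[-1]
    if latest > SPIKE_FACTOR * baseline:  # edge case: unexpected demand spike
        return f"ESCALATE: demand spike on {sku} ({latest} vs. avg {baseline:.0f})"
    reorder_point = baseline * lead_time_weeks
    if on_hand >= reorder_point:
        return "NO ACTION"
    qty = round(2 * reorder_point - on_hand)  # top up to two lead times of cover
    if qty > MAX_ORDER_UNITS:  # guardrail against overordering
        return f"ESCALATE: order of {qty} units exceeds cap"
    return place_order(sku, qty)
```

With 40 units on hand and steady sales of 50 per week, the agent places an order for 160 units without asking anyone; if the latest week jumps to 140, it escalates instead of extrapolating from a spike.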

Common obstacles

As single-agent systems unlock more advanced automation, many organizations run into practical roadblocks that make scaling difficult.

- Legacy integration challenges. Many single-agent systems struggle to connect with outdated architectures and data formats, making integration technically complex and resource-intensive.

- Latency and performance issues. As agents perform more complex tasks, delays in processing or tool calls can degrade user experience and system reliability.

- Evolving compliance requirements. Emerging regulations and ethical standards introduce uncertainty. Without robust governance frameworks, staying compliant becomes a moving target.

- Compute and talent demands. Running agentic systems requires significant infrastructure and specialized skills, putting pressure on budgets and headcount planning.

- Tool fragmentation and vendor lock-in. The nascent agentic AI landscape makes it hard to choose the right tooling. Committing to a single vendor too early can limit flexibility and drive up long-term costs.

- Traceability and tool call visibility. Many organizations lack the observability and granular intervention these systems require. Without detailed traceability and the ability to intervene at the level of individual tool calls, systems can easily run amok, leading to unpredictable outcomes and increased risk.

Tool and infrastructure requirements

At this stage, your infrastructure needs to do more than support experimentation: it needs to keep agents connected, running smoothly, and operating securely at scale.

- An integration platform with tools that enable seamless connectivity between the AI agent and your core business systems, ensuring smooth data flow across environments.

- Monitoring systems designed to track and analyze the agent’s performance and outcomes, flag issues, and surface insights for ongoing improvement.

- Compliance management tools that help enforce AI policies and adapt quickly to evolving regulatory requirements.

- Scalable, reliable storage to handle the growing volume of data generated and exchanged by AI agents.

- Consistent compute access to keep agents performing efficiently under fluctuating workloads.

- Layered security controls that protect data, manage access, and maintain trust as agents operate across systems.

- Dynamic intervention and moderation that can detect when processes aren’t adhering to policies, intervene in real time, and send alerts for human review.
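Tool-level traceability and intervention can be prototyped as a wrapper around each tool an agent is allowed to call. This is a hypothetical sketch: the policy (purchase orders above 500 units need a human) and all names, including `governed` and `place_order`, are assumptions. Every call is checked before it runs, every attempt is recorded in a trace, and blocked calls raise an alert instead of executing.

```python
import json
import time
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # one entry per attempted tool call
ALERTS: List[str] = []            # messages routed to a human reviewer

MAX_AUTONOMOUS_QTY = 500  # hypothetical policy limit


def policy_allows(tool: str, args: Dict[str, Any]) -> bool:
    """Hypothetical policy: large purchase orders need a human."""
    return not (tool == "place_order" and args.get("qty", 0) > MAX_AUTONOMOUS_QTY)


def governed(tool: str, fn: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so every call is checked against policy and traced."""
    def wrapper(**kwargs: Any) -> Any:
        entry: Dict[str, Any] = {"ts": time.time(), "tool": tool, "args": kwargs}
        if policy_allows(tool, kwargs):
            entry["result"] = fn(**kwargs)
            entry["status"] = "ok"
        else:  # intervene: block the call and alert a human
            entry["status"] = "blocked"
            ALERTS.append(f"Review required: {tool} {json.dumps(kwargs)}")
        TRACE.append(entry)
        return entry.get("result")
    return wrapper


place_order = governed("place_order", lambda sku, qty: f"PO for {qty} x {sku}")
```

Because the check lives in the wrapper rather than in the agent’s prompt, it still applies if the agent’s reasoning goes wrong.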

Preparing for the next stage

Before layering on more agents, organizations need to take stock of what’s working, where the gaps are, and how to strengthen coordination, visibility, and control at scale.

- Evaluate current agents. Identify performance limitations, system dependencies, and opportunities to improve or expand automation.

- Build coordination frameworks. Establish systems that can support seamless interaction and task-sharing between future agents.

- Strengthen observability. Implement monitoring tools that provide real-time insights into agent behavior, outputs, and failures at both the tool level and the agent level.

- Engage cross-functional teams. Align AI goals and risk management strategies across IT, legal, compliance, and business units.

- Embed automated policy enforcement. Build in mechanisms that uphold security standards and support regulatory compliance as agent systems expand.

Key takeaways

Single-agent systems offer significant capability by enabling autonomous actions that increase operational efficiency. However, they often come with higher costs than non-agentic RAG workflows like those in the Process AI stage, as well as increased latency and more variable response times.

Because these agents make decisions and take actions on their own, they require tight integration, careful governance, and full traceability.

If foundational controls like observability, governance, security, and auditability aren’t firmly established in the Process AI stage, these gaps will only widen, exposing the organization to greater risks around cost, compliance, and brand reputation.

Stage 3: Multi-agent systems

What this stage looks like

In this stage, multiple AI agents work together, each with its own task, tools, and logic, to achieve shared goals with minimal human involvement. These agents operate autonomously, but they also coordinate, share information, and adjust their actions based on what others are doing.

Unlike in single-agent systems, decisions aren’t made in isolation. Each agent acts based on its own observations and context, contributing to a system that behaves more like a team: planning, delegating, and adapting in real time.

This kind of distributed intelligence unlocks powerful use cases and massive scale. But as you might expect, it also introduces significant operational complexity: overlapping decisions, system interdependencies, and the potential for cascading failures if agents fall out of sync.

Getting this right demands strong architecture, real-time observability, and tight controls.

Supply chain example for multi-agent systems

In earlier stages, a chatbot was used to summarize shipments, and a single-agent system was deployed to automate inventory restocking.

In this example, a network of AI agents is deployed, each specializing in a different part of the operation, from forecasting and video analysis to scheduling and logistics.

When an unexpected shipment volume is forecasted, the agents kick into action:

- A forecasting agent projects capacity needs.

- A computer vision agent analyzes live warehouse footage to find underutilized space.

- A delay prediction agent taps time series data to anticipate late arrivals.

These agents communicate and coordinate in real time, adjusting workflows, updating the warehouse manager, and even triggering downstream changes like rescheduling vendor pickups.
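A toy version of this coordination pattern is sketched below, assuming a shared in-process message bus. All agent logic and numbers (including the 400-pallet dock capacity and the four-hour delay threshold) are hypothetical stand-ins for the forecasting, computer vision, and delay prediction models described above: each specialist publishes its finding, and a coordinator turns the combined picture into actions for the warehouse manager.

```python
from collections import defaultdict
from typing import Any, Dict, List

DOCK_CAPACITY_PALLETS = 400  # hypothetical normal inbound capacity


class MessageBus:
    """Shared channel that agents publish to and read from (in-process stand-in)."""

    def __init__(self) -> None:
        self.topics: Dict[str, List[Any]] = defaultdict(list)

    def publish(self, topic: str, message: Any) -> None:
        self.topics[topic].append(message)

    def latest(self, topic: str) -> Any:
        messages = self.topics.get(topic)
        return messages[-1] if messages else None


def forecasting_agent(bus: MessageBus, forecast_pallets: int) -> None:
    bus.publish("capacity_need", max(0, forecast_pallets - DOCK_CAPACITY_PALLETS))


def vision_agent(bus: MessageBus, free_slots_by_zone: Dict[str, int]) -> None:
    # Stand-in for a model that counts empty pallet slots in live camera footage.
    bus.publish("free_space", free_slots_by_zone)


def delay_agent(bus: MessageBus, predicted_delay_hours: Dict[str, float]) -> None:
    # Stand-in for a time series model; flags carriers predicted more than 4h late.
    bus.publish("late_carriers", [c for c, h in predicted_delay_hours.items() if h > 4])


def coordinator(bus: MessageBus) -> List[str]:
    """Combine the agents' findings into actions for the warehouse manager."""
    need = bus.latest("capacity_need")
    actions: List[str] = []
    # Fill the emptiest zones first.
    for zone, free in sorted(bus.latest("free_space").items(), key=lambda kv: -kv[1]):
        if need <= 0:
            break
        take = min(free, need)
        actions.append(f"reserve {take} slots in zone {zone}")
        need -= take
    if need > 0:
        actions.append(f"alert manager: {need} pallets still without space")
    actions += [f"reschedule pickup with {c}" for c in bus.latest("late_carriers")]
    return actions
```

Because the agents exchange information only through the bus, each one can be swapped or debugged independently. The same indirection is also where tracing belongs, since a wrong value published by any one agent flows straight into the coordinator’s actions.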

This level of autonomy unlocks speed and scale that manual processes can’t match. But it also means one faulty agent, or a breakdown in communication, can ripple across the system.

At this stage, visibility, traceability, intervention, and guardrails become non-negotiable.

Common obstacles

The shift to multi-agent systems isn’t just a step up in capability; it’s a leap in complexity. Every new agent added to the system introduces new variables, new interdependencies, and new ways for things to break if your foundations aren’t solid.

- Escalating infrastructure and operational costs. Running multi-agent systems is expensive, especially as each agent drives more API calls, orchestration layers, and real-time compute demands. Costs compound quickly across multiple fronts:

  - Specialized tooling and licenses. Building and managing agentic workflows often requires niche tools or frameworks, increasing costs and limiting flexibility.

  - Resource-intensive compute. Multi-agent systems demand high-performance hardware, like GPUs, that is costly to scale and difficult to manage efficiently.

  - Scaling the team. Multi-agent systems require niche expertise across AI, MLOps, and infrastructure, often adding headcount and increasing payroll costs in an already competitive talent market.

  - Operational overhead. Even autonomous systems need hands-on support. Standing up and maintaining multi-agent workflows often requires significant manual effort from IT and infrastructure teams, especially during deployment, integration, and ongoing monitoring.

  - Deployment sprawl. Managing agents across cloud, edge, desktop, and mobile environments introduces significantly more complexity than predictive AI, which typically relies on a single endpoint. By comparison, multi-agent systems often require 5x the coordination, infrastructure, and support to deploy and maintain.

- Misaligned agents. Without strong coordination, agents can take conflicting actions, duplicate work, or pursue goals out of sync with business priorities.

- Security surface expansion. Each additional agent introduces a new potential vulnerability, making it harder to protect systems and data end-to-end.

- Vendor and tooling lock-in. Emerging ecosystems can lead to heavy dependence on a single provider, making future changes costly and disruptive.

- Cloud constraints. When multi-agent workloads are tied to a single provider, organizations risk running into compute throttling, burst limits, or regional capacity issues, especially as demand becomes less predictable and harder to control.

- Autonomy without oversight. Agents may exploit loopholes or behave unpredictably if not tightly governed, creating risks that are hard to contain in real time.

- Dynamic resource allocation. Multi-agent workflows often require infrastructure that can reallocate compute (e.g., GPUs, CPUs) in real time, adding new layers of complexity and cost to resource management.

- Model orchestration complexity. Coordinating agents that rely on diverse models or reasoning strategies introduces integration overhead and increases the risk of failure across workflows.

- Fragmented observability. Tracing decisions, debugging failures, or identifying bottlenecks becomes much harder as agent count and autonomy grow.

- No clear “done.” Without strong task verification and output validation, agents can drift off course, fail silently, or burn unnecessary compute.
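One way to give agents a clear “done” is to pair each task with an explicit validator and a step budget. In this hypothetical sketch, `is_valid_po` assumes the task’s output is a JSON purchase order with a positive integer `qty`, and `run_until_done` is an illustrative helper; the loop stops at the first output that passes and returns `None` when the budget runs out, so the caller can escalate instead of letting the agent drift.

```python
import json
from typing import Callable, Optional, Tuple


def is_valid_po(text: str) -> bool:
    """Hypothetical completion check: a JSON object with a positive integer qty."""
    try:
        po = json.loads(text)
    except json.JSONDecodeError:
        return False
    return isinstance(po, dict) and isinstance(po.get("qty"), int) and po["qty"] > 0


def run_until_done(step: Callable[[int], str], is_done: Callable[[str], bool],
                   max_steps: int = 5) -> Tuple[Optional[str], int]:
    """Stop at the first validated output, or give up after a fixed step budget."""
    for attempt in range(1, max_steps + 1):
        candidate = step(attempt)  # one agent step producing a candidate output
        if is_done(candidate):
            return candidate, attempt
    return None, max_steps  # budget exhausted: escalate instead of failing silently
```

The step budget caps compute spend per task, and the explicit `None` result makes a stalled agent visible to the caller rather than silent.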

Tool and infrastructure requirements

Once agents start making decisions and coordinating with one another, your systems need to do more than keep up; they need to stay in control. These are the core capabilities to have in place before scaling multi-agent workflows in production.

- Elastic compute resources. Scalable access to GPUs, CPUs, and high-performance infrastructure that can be dynamically reallocated to support intensive agentic workloads in real time.

- Multi-LLM access and routing. Flexibility to test, compare, and route tasks across different LLMs to control costs and optimize performance by use case.

- Autonomous system safeguards. Built-in security frameworks that prevent misuse, protect data integrity, and enforce compliance across distributed agent actions.

- Agent orchestration layer. Workflow orchestration tools that coordinate task delegation, tool usage, and communication between agents at scale.

- Interoperable platform architecture. Open systems that support integration with diverse tools and technologies, helping you avoid lock-in and maintain long-term flexibility.

- End-to-end dynamic observability and intervention. Monitoring, moderation, and traceability tools that not only surface agent behavior, detect anomalies, and support real-time intervention, but also adapt as agents evolve. These tools can identify when agents attempt to exploit loopholes or create new ones, triggering alerts or halting processes to re-engage human oversight.
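Multi-LLM routing can begin as a simple rule: send each task to the cheapest model that meets its capability requirement. In this sketch the model names, prices, and capability scores are hypothetical placeholders, not real benchmarks or price lists, and `route` is an illustrative helper rather than any product’s API.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_1k_tokens: float
    capability: int  # hypothetical 1-5 score; higher handles harder tasks


CATALOG: List[ModelOption] = [
    ModelOption("small-fast", 0.0005, 2),
    ModelOption("mid-general", 0.003, 3),
    ModelOption("large-reasoning", 0.015, 5),
]


def route(required_capability: int, est_tokens: int) -> Tuple[str, float]:
    """Return the cheapest capable model and its estimated cost in USD."""
    eligible = [m for m in CATALOG if m.capability >= required_capability]
    if not eligible:
        raise ValueError("no model meets the capability requirement")
    best = min(eligible, key=lambda m: m.usd_per_1k_tokens)
    return best.name, best.usd_per_1k_tokens * est_tokens / 1000
```

Keeping the catalog as data means models can be added, re-priced, or retired without changing agent code, which is one practical way to avoid locking agents to a single provider.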

Preparing for the next stage

There’s no playbook for what comes after multi-agent systems, but organizations that prepare now will be the ones shaping what comes next. Building a flexible, resilient foundation is the best way to stay ahead of fast-moving capabilities, shifting regulations, and evolving risks.

- Enable dynamic resource allocation. Infrastructure should support real-time reallocation of GPUs, CPUs, and compute capacity as agent workflows evolve.

- Implement granular observability. Use advanced monitoring and alerting tools to detect anomalies and trace agent behavior at the most detailed level.

- Prioritize interoperability and flexibility. Choose tools and platforms that integrate easily with other systems and support hot-swapping components and streamlined CI/CD workflows, so you’re not locked into one vendor or tech stack.

- Build multi-cloud fluency. Ensure your teams can work across cloud platforms to distribute workloads efficiently, reduce bottlenecks, avoid provider-specific limitations, and support long-term flexibility.

- Centralize AI asset management. Use a unified registry to govern access, deployment, and versioning of all AI tools and agents.

- Evolve security with your agents. Implement adaptive, context-aware security protocols that respond to emerging threats in real time.

- Prioritize traceability. Ensure all agent decisions are logged, explainable, and auditable to support investigation and continuous improvement.

- Stay current with tools and systems. Build systems and workflows that can continuously test and integrate new models, prompts, and data sources.

Key takeaways

Multi-agent systems promise scale, but without the right foundation, they’ll amplify your problems, not solve them.

As agents multiply and decisions become more distributed, even small gaps in governance, integration, or security can cascade into costly failures.

AI leaders who succeed at this stage won’t be the ones chasing the flashiest demos; they’ll be the ones who planned for complexity before it arrived.

Advancing to agentic AI without losing control

AI maturity doesn’t happen all at once. Each stage, from early experiments to multi-agent systems, brings new value but also new complexity. The key isn’t to rush forward. It’s to move with intention, building on strong foundations at every step.

For AI leaders, this means scaling AI in ways that are cost-effective, well-governed, and resilient to change.

You don’t have to do everything right now, but the decisions you make now will shape how far you can go.

Want to advance through AI maturity safely and efficiently? Request a demo to see how our Agentic AI Apps Platform ensures secure, cost-effective growth at every stage.

About the authors

Lisa Aguilar is VP of Product Marketing and Field CTOs at DataRobot, where she is responsible for building and executing the go-to-market strategy for their AI-driven forecasting product line. As part of her role, she partners closely with the product management and development teams to identify key features that can address the needs of retailers, manufacturers, and financial service providers with AI. Prior to DataRobot, Lisa was at ThoughtSpot, the leader in Search and AI-Driven Analytics.

Dr. Ramyanshu (Romi) Datta is the Vice President of Product for AI Platform at DataRobot, responsible for capabilities that enable orchestration and lifecycle management of AI Agents and Applications. Previously he was at AWS, leading product management for AWS’ AI Platforms: Amazon Bedrock Core Systems and Generative AI on Amazon SageMaker. He was also GM for AWS’s Human-in-the-Loop AI services. Prior to AWS, Dr. Datta held engineering and product roles at IBM and Nvidia. He received his M.S. and Ph.D. degrees in Computer Engineering from the University of Texas at Austin, and his MBA from the University of Chicago Booth School of Business. He is a co-inventor of 25+ patents on subjects ranging from Artificial Intelligence, Cloud Computing & Storage to High-Performance Semiconductor Design and Testing.

Dr. Debadeepta Dey is a Distinguished Researcher at DataRobot, where he leads dual-purpose strategic research initiatives. These initiatives focus on advancing the fundamental state of the art in Deep Learning and Generative AI, while also solving pervasive problems faced by DataRobot’s customers, with the goal of enabling them to derive value from AI. He completed his PhD in AI and Robotics at The Robotics Institute, Carnegie Mellon University in 2015. From 2015 to 2024, he was a researcher at Microsoft Research. His primary research interests include Reinforcement Learning, AutoML, Neural Architecture Search, and high-dimensional planning. He regularly serves as Area Chair at ICML, NeurIPS, and ICLR, and has published over 30 papers in top-tier AI and Robotics journals and conferences. His work has been recognized with a Best Paper of the Year Shortlist nomination at the International Journal of Robotics Research.